The Road to Responsible AI: Salesforce and the Ethics of Einstein GPT

Jenna Trott

5 min read | JUNE 13, 2023

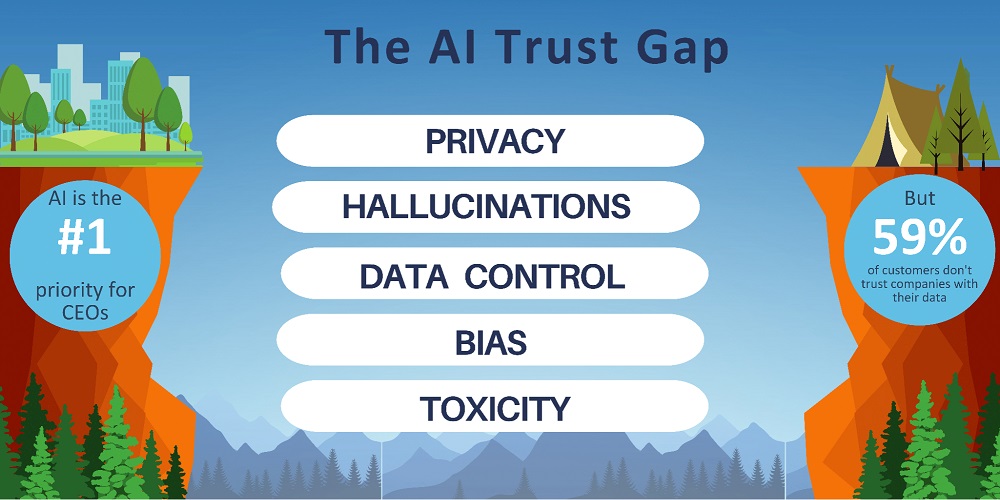

If you’ve been on planet Earth in the last eighteen months, then you know the meteoric rise of two phrases: “artificial intelligence” and “generative AI.” Yesterday marked a significant milestone as Salesforce hosted Salesforce AI Day, an event filled with major announcements, live demos, and in-depth discussions. In-person and virtual attendees watched Marc Benioff, Salesforce’s CEO and Co-Founder, in conversation with a range of AI visionaries about the possibilities ahead for enterprise generative AI.

One of the biggest takeaways from the event was the notion of an “AI Trust Gap”: CEOs cite AI as their top priority, while an overwhelming majority of customers have deep reservations about entrusting their data to companies. That raises the question: how can Salesforce accelerate companies’ productivity with generative AI without compromising human rights or customer data? Marc Benioff and a team of Salesforce AI experts sat down to answer this very question. Weren’t able to catch it live? No worries: below, we break down the critical points about the future of enterprise generative AI and how Salesforce plans to prioritize trust.

Core Values: Trust as the Cornerstone

Salesforce has long established trust as paramount to its mission. Through an unwavering commitment to transparency, security, compliance, privacy, and performance, Salesforce earns the trust of those it serves, and it plans to extend the same principle to generative AI. Spearheading this effort is Kathy Baxter, Principal Architect, Ethical AI Practice at Salesforce, who assures customers that every conversation about AI begins and ends with trust. Kathy remarks that customer data “is not [Salesforce’s] product,” which is why the Salesforce Ohana has set five priorities for moving into the next frontier of AI:

- Accuracy

- Safety

- Transparency

- Empowerment

- Sustainability

What Makes Einstein GPT Different?

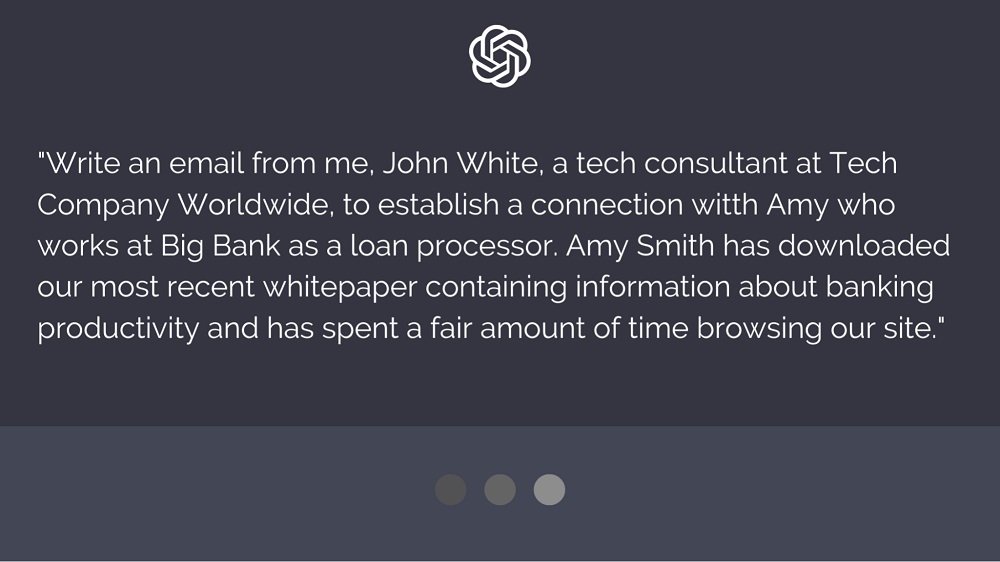

Public large language models (LLMs) can swiftly generate eloquent email content, but they fall short when it comes to personalization. This raises a crucial question: why can’t companies simply put specific customer information into a large language model’s prompt to tailor messages to individual clients? This seemingly simple solution presents a colossal ethical concern. Patrick Stokes, EVP & GM, Platform at Salesforce, illustrates the issue with an example.

In Patrick’s scenario, the prompt for a public generative AI model includes specific details about himself, his role, the client’s identity and role at a particular company, and the whitepapers the client recently downloaded. The email the model generates appears flawless and can be copied and pasted for immediate use. However, a profound problem emerges: all of that customer data has been included in the prompt, exposing sensitive personally identifiable information (PII) to a public model outside the company’s control. This situation underscores the gravity of the ethical implications at hand. This is where Einstein GPT comes in.

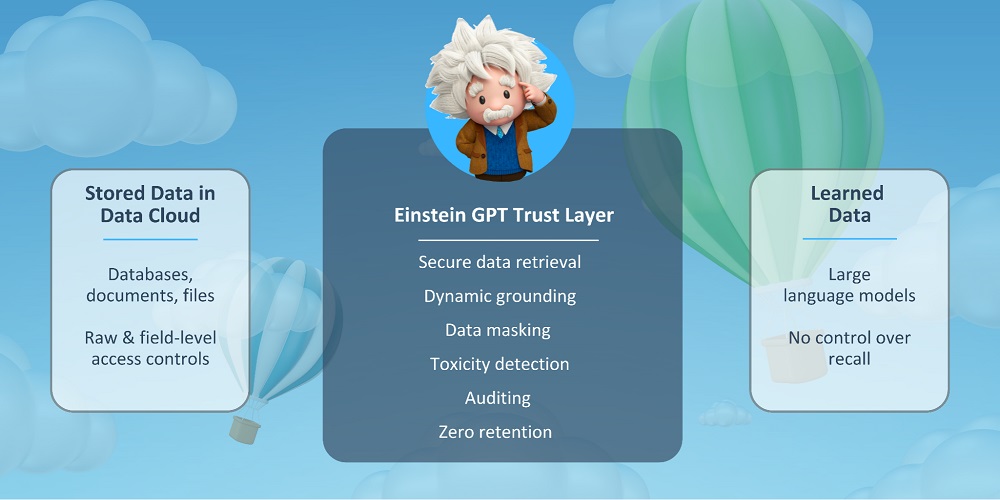

Returning to Patrick’s scenario, Einstein GPT uses data masking: when information is entered, sensitive organizational data is safeguarded by substituting realistic (yet fabricated) alternatives for the real values. The goal is to protect sensitive information while offering a viable stand-in when the real data is unnecessary. As a result, Salesforce users can create highly curated messaging for clients without ever leaving Salesforce, and agents can rest assured that sensitive client data is handled appropriately and ethically.
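To make the masking idea concrete, here is a minimal Python sketch. It is not Salesforce’s implementation: the fabricated-value pools and the names `FAKE_POOLS`, `mask_prompt`, `unmask`, and `replace_all` are hypothetical, and the sketch assumes we already know which values are sensitive (for example, because they come from specific CRM fields). Real values are swapped for fabricated ones before the prompt leaves the org, and a private fake-to-real map restores them in the model’s reply.

```python
import re

# Hypothetical pools of realistic but fabricated values (not a Salesforce API).
FAKE_POOLS = {
    "name": ["Alex Morgan", "Sam Rivera", "Jordan Lee"],
    "company": ["Northwind Ltd", "Contoso Inc"],
    "email": ["contact1@example.com", "contact2@example.com"],
}


def replace_all(text: str, mapping: dict[str, str]) -> str:
    """Replace every key of `mapping` in `text` in one pass (no chained replacements)."""
    if not mapping:
        return text
    # Try longer keys first so a longer value is not split by a shorter overlapping one.
    keys = sorted(mapping, key=len, reverse=True)
    pattern = re.compile("|".join(re.escape(k) for k in keys))
    return pattern.sub(lambda m: mapping[m.group(0)], text)


def mask_prompt(prompt: str, sensitive: dict[str, list[str]]) -> tuple[str, dict[str, str]]:
    """Swap real values for fabricated ones.

    Returns the masked prompt plus a fake->real map that stays inside the org.
    """
    real_to_fake: dict[str, str] = {}
    for kind, values in sensitive.items():
        pool = FAKE_POOLS[kind]
        if len(values) > len(pool):
            raise ValueError(f"not enough fabricated values for {kind!r}")
        for real, fake in zip(values, pool):
            real_to_fake[real] = fake
    fake_to_real = {fake: real for real, fake in real_to_fake.items()}
    return replace_all(prompt, real_to_fake), fake_to_real


def unmask(text: str, fake_to_real: dict[str, str]) -> str:
    """Restore the real values in the model's response, inside the trusted boundary."""
    return replace_all(text, fake_to_real)


prompt = ("Write a follow-up email from Patrick to Dana Whitfield at Acme Corp "
          "(dana@acme.com) about the whitepaper she downloaded.")
masked, key = mask_prompt(prompt, {
    "name": ["Patrick", "Dana Whitfield"],
    "company": ["Acme Corp"],
    "email": ["dana@acme.com"],
})
print(masked)
# Write a follow-up email from Alex Morgan to Sam Rivera at Northwind Ltd (contact1@example.com) about the whitepaper she downloaded.

# Stand-in for the LLM's output, written against the fabricated values.
reply = "Hi Sam Rivera, thanks for downloading our whitepaper. Best, Alex Morgan"
print(unmask(reply, key))
# Hi Dana Whitfield, thanks for downloading our whitepaper. Best, Patrick
```

Replacing everything in a single regex pass, rather than calling `str.replace` once per value, avoids one substitution accidentally rewriting the output of another. A production system would also need to detect PII in free text, which this sketch does not attempt.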

Einstein GPT’s “Trust Layer”

Okay, so, we get it – Salesforce is prioritizing trust and transparency to promote the ethical use of data in enterprise generative AI – but how is that achieved?

Patrick Stokes notes that one of the most frequently asked questions is: how can we trust AI? Companies, especially those entrusted with highly sensitive information, have valid concerns about how data is stored and handled once it is entered into a large language model (LLM). Where does it go? Where does it live? To answer, Patrick asks a question of his own: what is an apple? Well, an apple is a fruit; apples can be red, sometimes green, and they grow on trees. Patrick then asks where, exactly, the information that lets us recognize an apple is stored in our brains. Silence. The crux of his argument is that learned knowledge is very different from the data we store in the cloud, which can be secured with user access controls. Our ability to recognize an apple is not innate; it is a learned concept acquired through interactions with specific environmental cues. That is what allows us to identify an apple even though no two apples look exactly alike.

Generative AI works the same way: it is built on deep learning. Models’ capabilities are ever-expanding and their weights are constantly changing, so once data has been learned into those weights, it cannot be located and locked down with access controls the way a stored record can. Salesforce recognizes this burden of responsibility and offers a solution in Einstein GPT’s “Trust Layer”:

- Secure data retrieval

- Dynamic grounding

- Data masking

- Toxicity detection

- Auditing

- Zero retention

Before the final message reaches the intended recipient, it is screened for toxicity and for any potentially unhelpful or inaccurate information. This step ensures that the content meets the highest standards of quality. The entire process is recorded in a stringent audit trail, maintaining accountability and transparency.

And the best part? Einstein GPT offers zero retention of personally identifiable customer data (PII), so customer data is handled with the utmost responsibility, privacy, and ethical consideration.
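The screening, auditing, and zero-retention steps described above can be pictured as guardrails wrapped around a single model call. The Python sketch below is only an illustration under stated assumptions, not how Einstein GPT is built: `generate_with_guardrails`, `AuditLog`, `BLOCKED_TERMS`, and `stub_model` are hypothetical, the prompt is assumed to be masked already, and the keyword blocklist merely marks where a real toxicity classifier would sit. Zero retention is ultimately a policy about what the model provider stores; client code can only mirror it by not persisting raw text, which is why this sketch's audit log keeps SHA-256 digests instead of prompts and responses.

```python
import hashlib
import time
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical keyword blocklist. Production toxicity detection relies on trained
# classifiers; a word list only shows where the check sits in the flow.
BLOCKED_TERMS = {"idiot", "stupid", "hate"}


@dataclass
class AuditLog:
    """Append-only trail of each step; stores SHA-256 digests rather than raw text."""
    entries: list = field(default_factory=list)

    def record(self, event: str, text: str) -> None:
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
        self.entries.append({"ts": time.time(), "event": event, "sha256": digest})


def is_toxic(text: str) -> bool:
    """Flag text containing any blocked term (case-insensitive, punctuation stripped)."""
    words = {w.strip(".,!?;:\"'").lower() for w in text.split()}
    return not words.isdisjoint(BLOCKED_TERMS)


def generate_with_guardrails(
    masked_prompt: str, model: Callable[[str], str], log: AuditLog
) -> Optional[str]:
    """Send an already-masked prompt, screen the reply, and audit every step.

    Nothing is cached or written to disk here; the only trace kept is the hashed log.
    """
    log.record("prompt_sent", masked_prompt)
    draft = model(masked_prompt)
    log.record("response_received", draft)
    if is_toxic(draft):
        log.record("response_blocked", draft)
        return None  # withhold the draft instead of passing it to the recipient
    log.record("response_released", draft)
    return draft


def stub_model(prompt: str) -> str:
    """Stand-in for an external LLM call; keeps no state between calls."""
    return "Hi Sam Rivera, thanks for downloading our whitepaper!"


log = AuditLog()
print(generate_with_guardrails("Write a follow-up email to Sam Rivera.", stub_model, log))
# Hi Sam Rivera, thanks for downloading our whitepaper!
print([e["event"] for e in log.entries])
# ['prompt_sent', 'response_received', 'response_released']
```

Hashing each step still gives an auditor a tamper-evident record (the same text always yields the same digest) without the log itself becoming another copy of customer data.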

Looking Forward

Salesforce’s launch of predictive Einstein in 2014 marked a significant milestone and the start of its transformative AI journey. With a commitment to progress, Salesforce aims to unlock experiences that were once considered out of reach, all while ensuring that no business is left behind.

Recognizing the limitations of existing GPT models, many of which are trained on data that is a year or more out of date, Salesforce strives for a higher standard: staying current with real-time information is imperative. Hence, Einstein GPT is designed to help businesses forge unparalleled connections with customers, transforming the way they interact and achieving what was previously deemed impossible.

Here at AGG, we eagerly await the future of enterprise generative AI, and are excited to witness the remarkable advancements Salesforce will bring to this new frontier.
